Claim & start

Business Data & Market Intelligence

How to Build a Competitor Price Monitoring Strategy (Without IP Bans)

In the fast-paced US e-commerce landscape, launching a product with the perfect price is only half the battle. Within hours, competitors will adjust their margins and deploy algorithmic repricing. Discover how leading data teams build scalable competitor price monitoring strategies, and how to use residential proxy networks to perform continuous retail price scraping without triggering anti-bot systems or IP bans.

Table of contents

In the highly competitive US e-commerce sector, setting your product pricing is no longer a quarterly boardroom decision—it is an algorithmic war fought in real-time. You might launch a product with the perfect profit margin on Monday, only to watch your sales plummet by Wednesday because a major competitor quietly dropped their price by 5%.

If your team is still relying on manual checks, or using outdated spreadsheets to track competitor SKUs, you are operating with a massive blind spot. By the time you notice a market shift, you have already lost market share.

To survive, modern brands require continuous, automated competitor price monitoring. However, acquiring this data presents a significant engineering challenge. Major retailers invest millions in anti-bot security systems specifically designed to block automated data collection. If you attempt to run a basic scraper, your server's IP address will be banned within minutes.

To win the pricing war, you need a strategy that combines smart data extraction with a robust residential proxy network. In this guide, we will break down the business value of automated pricing intelligence, why retailers block standard scrapers, and the exact infrastructure you need to extract accurate retail data safely.

Key Takeaways (TL;DR)

- The Definition: Competitor price monitoring is the automated process of extracting and analyzing competitor pricing, stock levels, and promotions in real-time to inform your own dynamic pricing strategy.

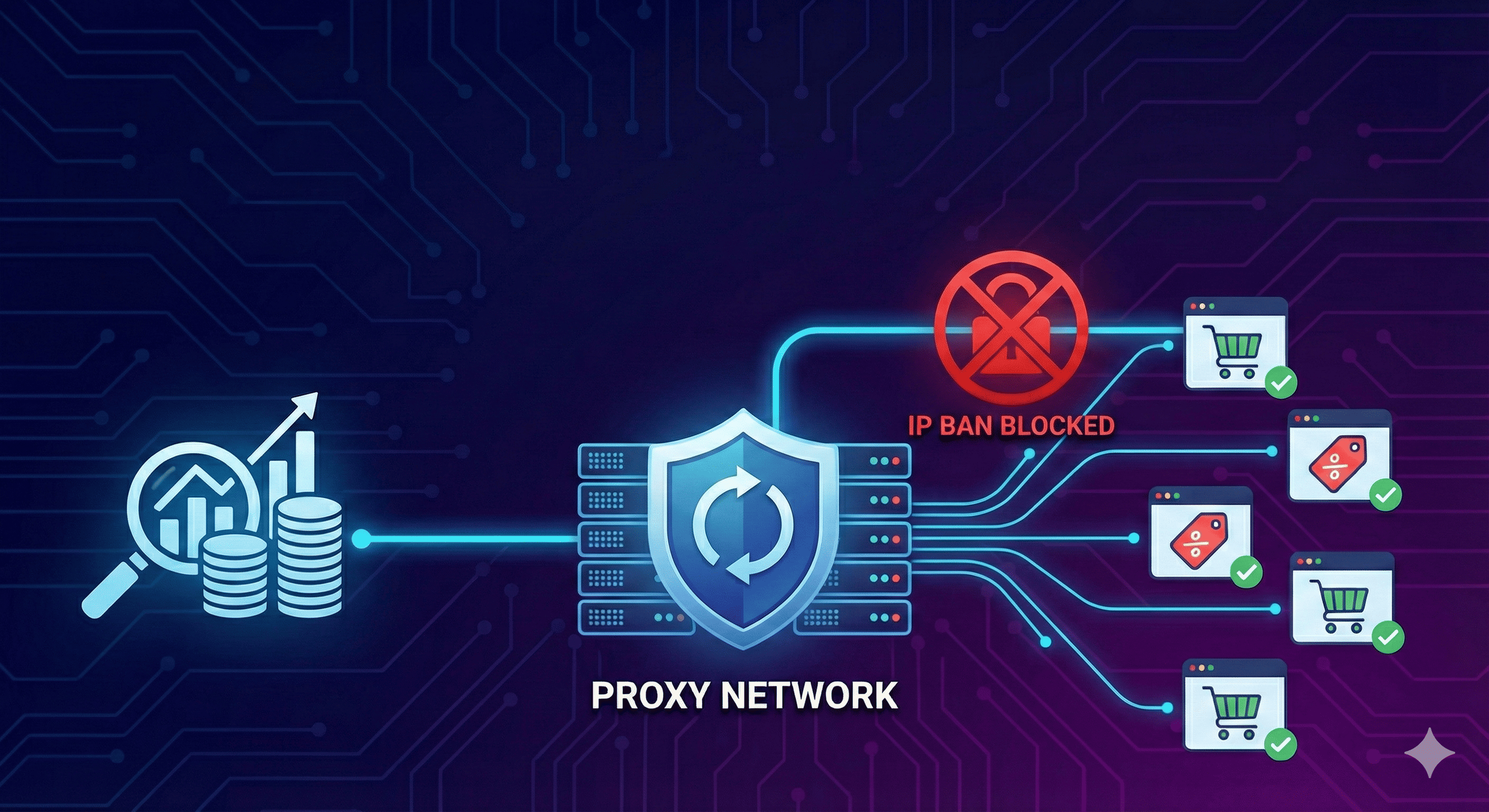

- The Engineering Barrier: Major US retailers (like Amazon, Walmart, and Target) use advanced anti-bot firewalls to block data center IPs and prevent automated retail price scraping.

- The Solution: To extract pricing data at scale without getting blocked or served fake data, data engineering teams must route their requests through a rotating network of real, residential IP addresses.

- Data Sovereignty: Building your own scraping architecture backed by premium proxy infrastructure is ultimately more scalable, flexible, and cost-effective than renting rigid, third-party pricing dashboards.

The Business Case for E-Commerce Data Extraction

Implementing a robust price tracking infrastructure goes far beyond simply knowing what the other guy is charging. When executed correctly, e-commerce data extraction directly impacts your bottom line in three critical ways.

1. Fueling Dynamic Pricing Strategies

The most successful online retailers do not have static prices. They use dynamic pricing algorithms that automatically adjust product costs based on market demand, inventory levels, and competitor movements. To feed these algorithms, you need an uninterrupted, hourly stream of accurate market data. If your scraper gets blocked, your algorithm freezes, and you either lose sales or sacrifice margin.

2. Protecting Profit Margins

Price monitoring is not just about a race to the bottom. Often, it reveals opportunities to raise your prices. If your scraping engine detects that your top three competitors have just run out of stock on a highly sought-after item, your algorithm can instantly raise your price by 10%, maximizing your profit margin while you hold a temporary monopoly.

3. Regional Pricing Intelligence

In the US market, prices are rarely national. A retailer might charge $45 for a product in a New York zip code, but $38 for the exact same product in Ohio to account for shipping logistics or local competition. To map this landscape accurately, your infrastructure must be capable of localized scraping.

The Engineering Reality: Why Retailers Block You

If the business case is so clear, why isn't every brand doing it? Because extracting data from modern e-commerce sites is an uphill architectural battle.

Retailers view their pricing data as highly proprietary. They utilize advanced Web Application Firewalls (WAFs) like Cloudflare, DataDome, and Akamai to protect their sites. If you spin up a standard Python scraper on an AWS or DigitalOcean server, the retailer's security system will instantly recognize that the request is coming from a data center, not a real human browser.

The result? You will be served a CAPTCHA, receive a 403 Forbidden error, or worse, the retailer might serve you poisoned data (fake prices designed to ruin your internal algorithms) without you even realizing it.

Engineering Note: Avoid the trap of using data center IPs for retail scraping. E-commerce firewalls block data center subnets by default. To bypass these security layers and access true, localized pricing data, your engineering team must route their scraping requests through a premium residential proxy network like MagneticProxy, which utilizes real ISP connections that appear entirely human to security algorithms.

How to Architect a Scalable Price Scraping Engine

To build a retail price scraping engine that actually works in a production environment, you need to upgrade your infrastructure. Here is the blueprint data teams use to achieve stealth and scale.

1. Shift to Rotating Residential Proxies

Instead of sending requests from a single server IP, you must route your traffic through residential proxy networks. These are IP addresses assigned by actual Internet Service Providers (ISPs) to real homes across the United States. When your scraper requests a product page via a residential proxy, the retailer's firewall sees what looks like a normal consumer browsing on their home Wi-Fi. By rotating these IPs with every request, you completely bypass rate limits and IP bans.

2. Implement Geo-Targeting

To solve the regional pricing problem, your proxy infrastructure must allow for state, city, or even ASN-level targeting. This allows your scraper to effectively "teleport" across the country, querying the retailer's website to see the exact price shown to a customer in Chicago, followed immediately by the price shown to a customer in Miami.

3. Handle JavaScript Rendering

Modern e-commerce sites rarely load prices in plain HTML; they render them dynamically using JavaScript. Your architecture must combine your proxy network with headless browser automation (like Puppeteer or Playwright) to fully render the page, execute the JavaScript, and extract the accurate price tag before the connection is closed.

Build vs. Buy: Owning Your Pricing Data

Many brands initially turn to "black box" SaaS pricing tools because they seem easier to implement. However, as your company grows, the limitations of these tools become severe bottlenecks. They often charge exorbitant fees per SKU, restrict how frequently you can update data, and limit the specific competitors you are allowed to track.

By building your own internal competitor price monitoring pipeline, you achieve total data sovereignty. You control the frequency, the targets, and the analytics.

The only missing piece to building your own engine is the network infrastructure. By plugging a highly reliable proxy API into your custom code, your data team can focus on extracting market intelligence and building revenue-driving algorithms, rather than constantly fighting IP bans.

Ready to scale your retail data extraction without getting blocked? Deploy the MagneticProxy residential network and start scraping safely today.

Latest Posts

Here’s how Profile Peeker enables organizations to transform profile data into business opportunities.